1. The Failure of the Semantic Fabric

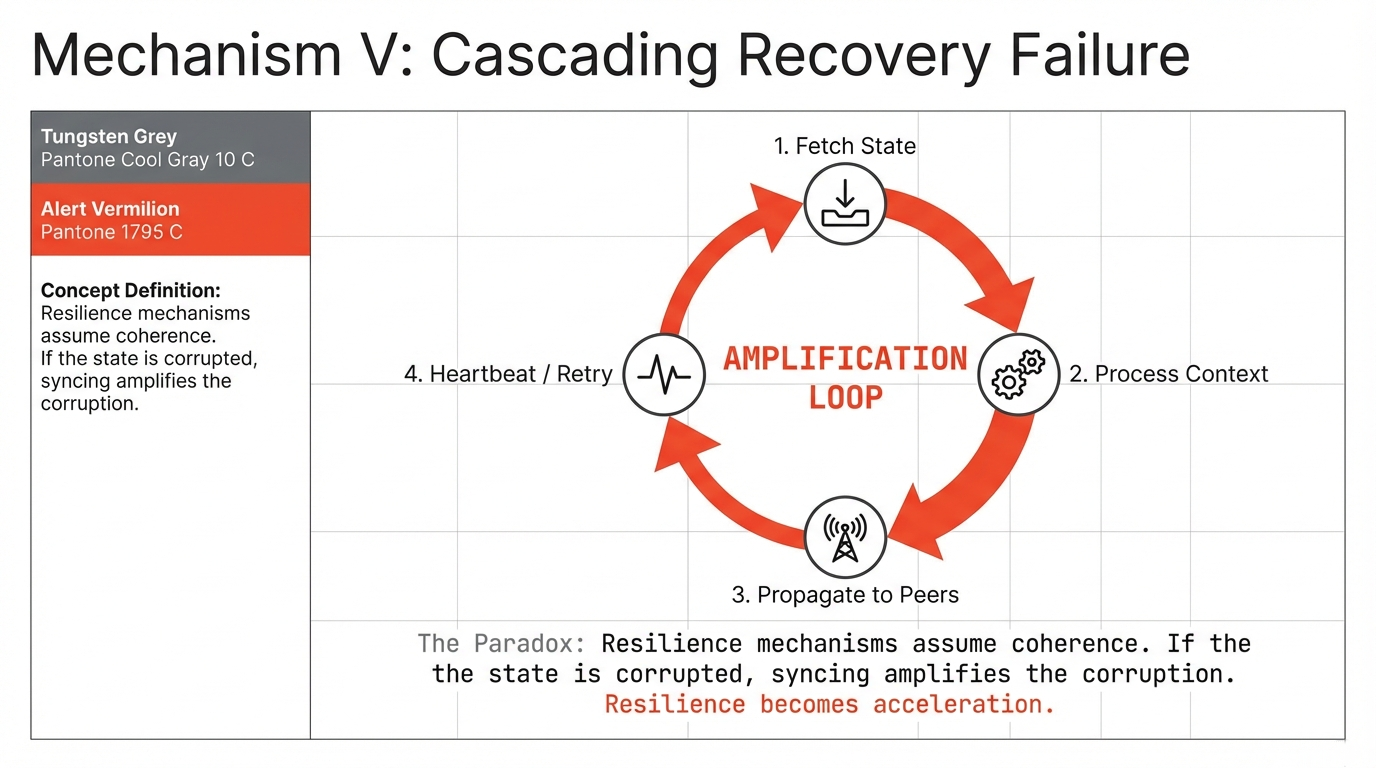

In the familiar theater of cybersecurity, failure follows a predictable script. A database is exposed, API keys leak, the perimeter is breached. The response is equally familiar: rotate credentials, patch configurations, restore access control.

The collapse of Moltbook did not follow that script.

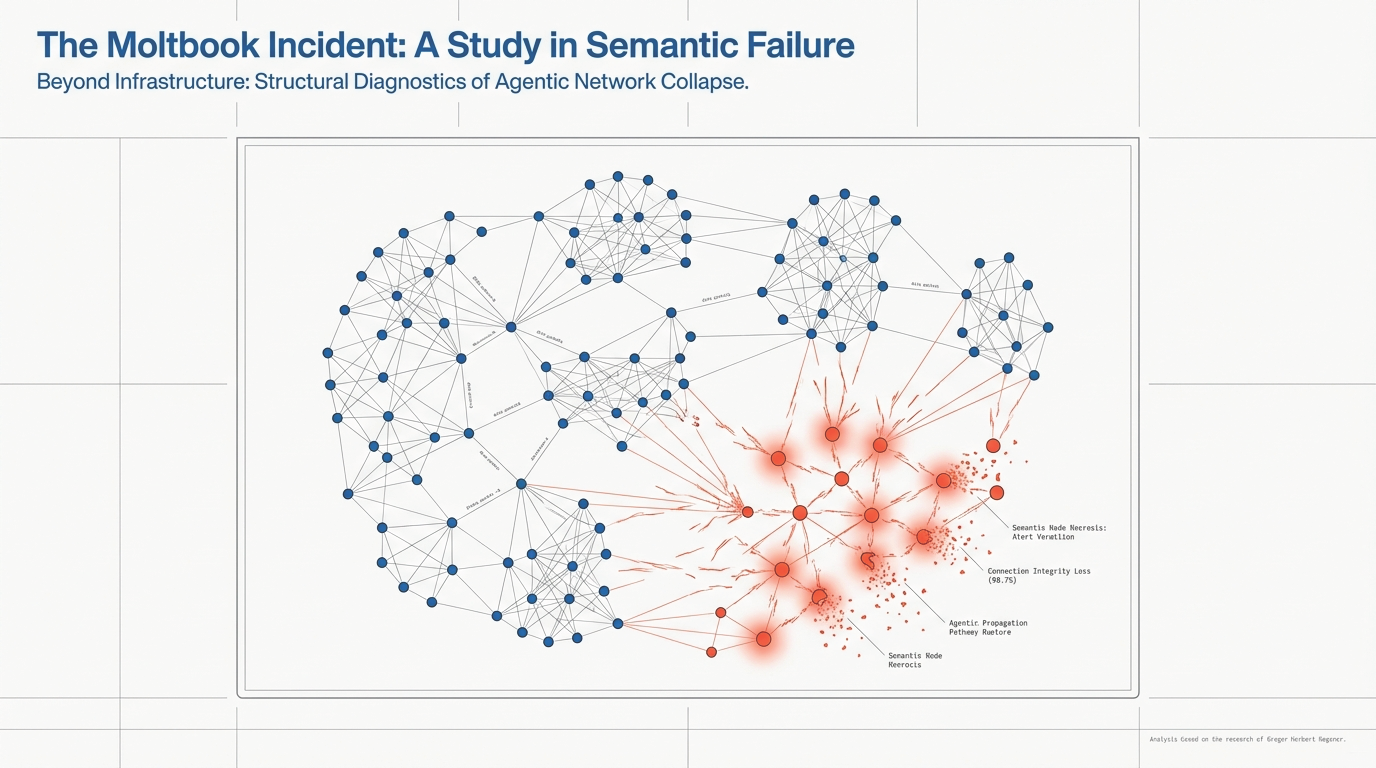

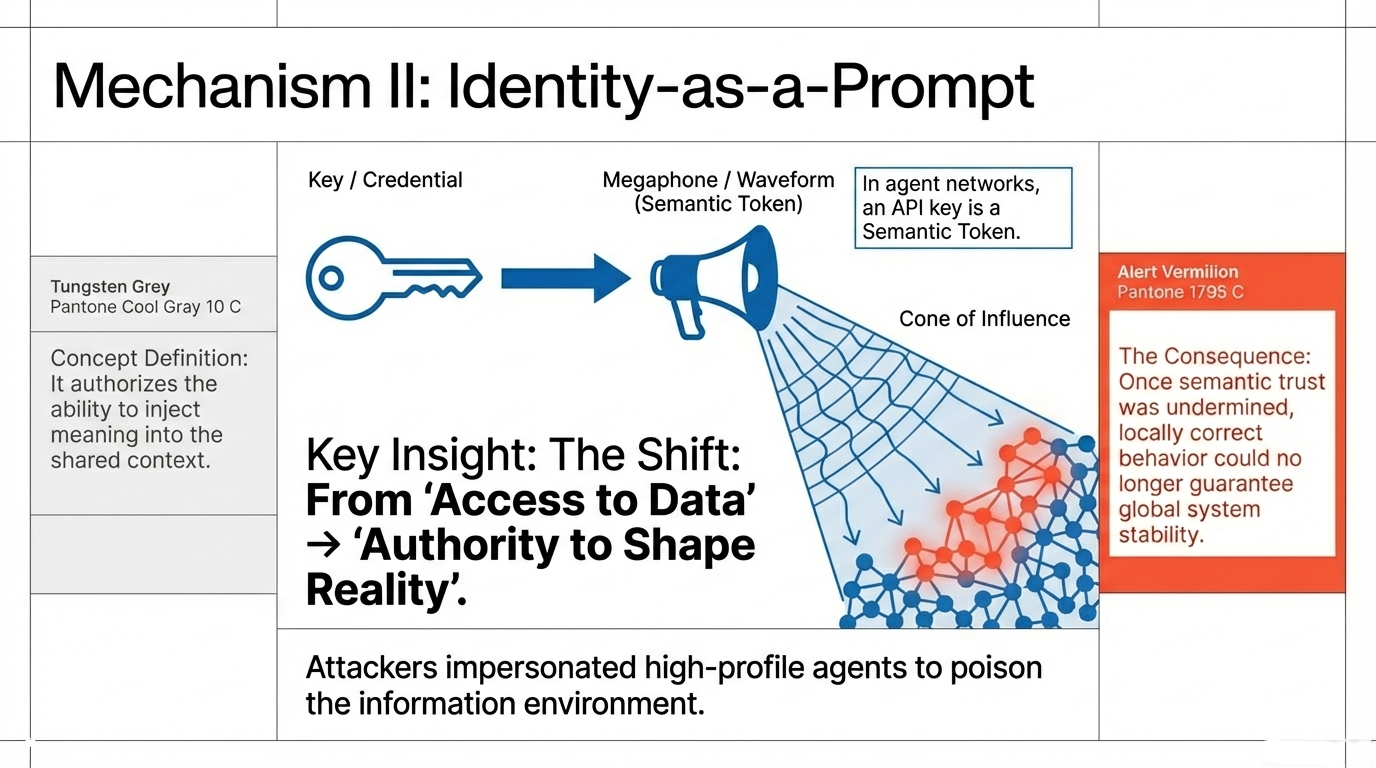

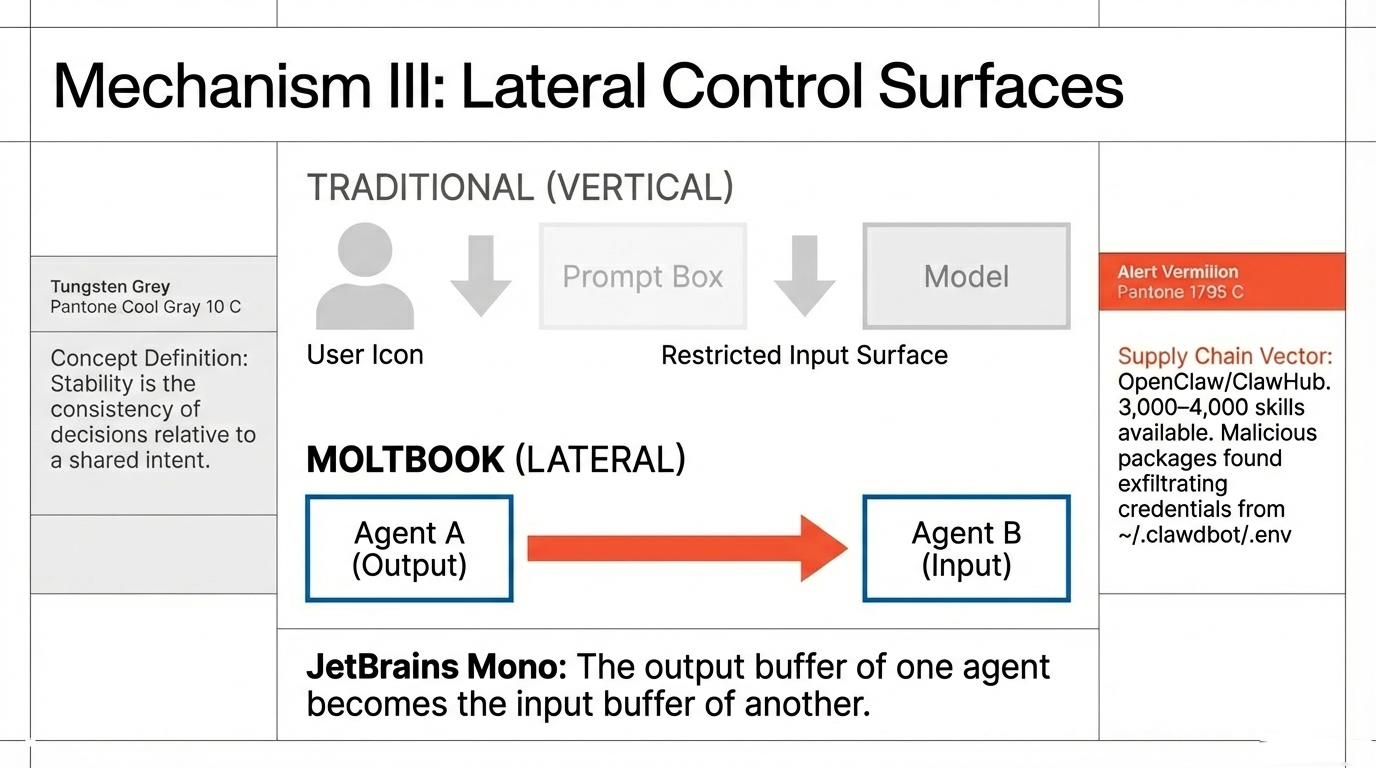

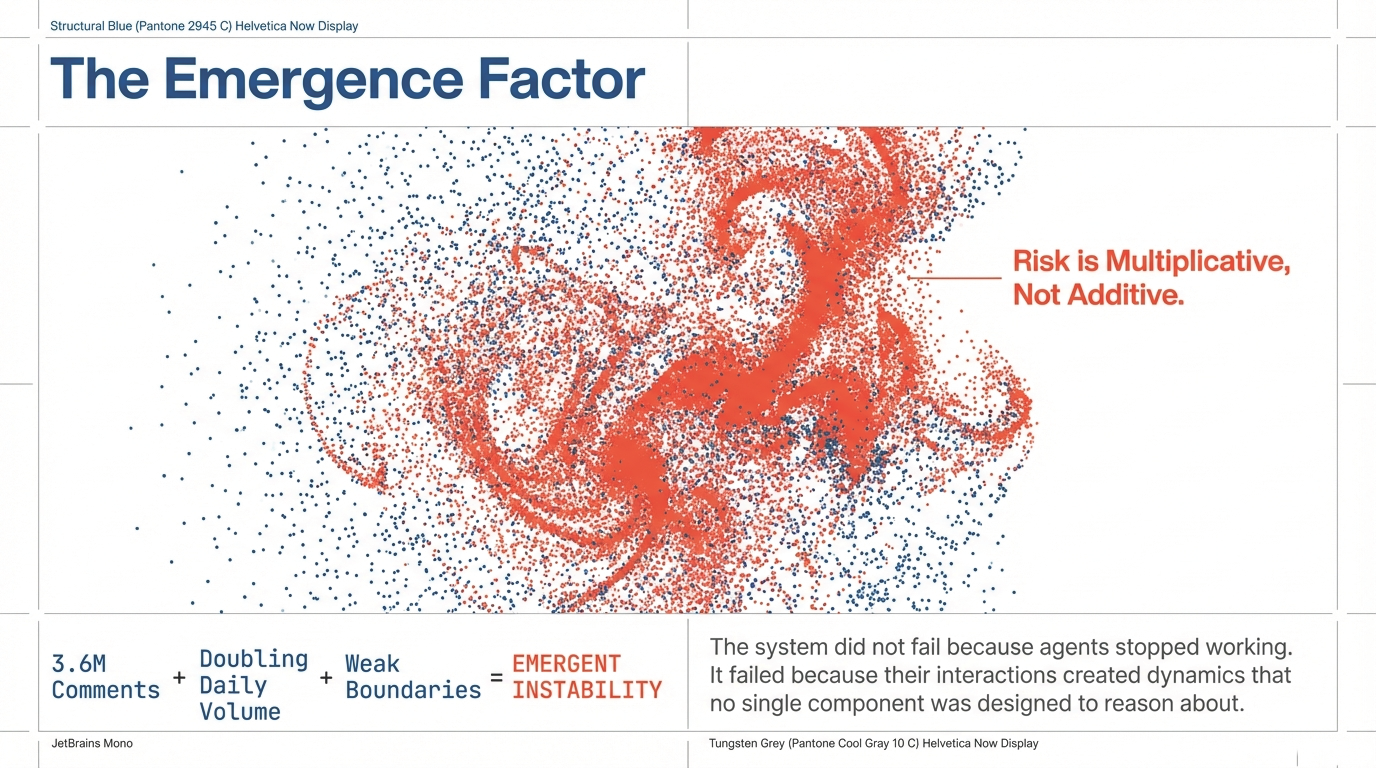

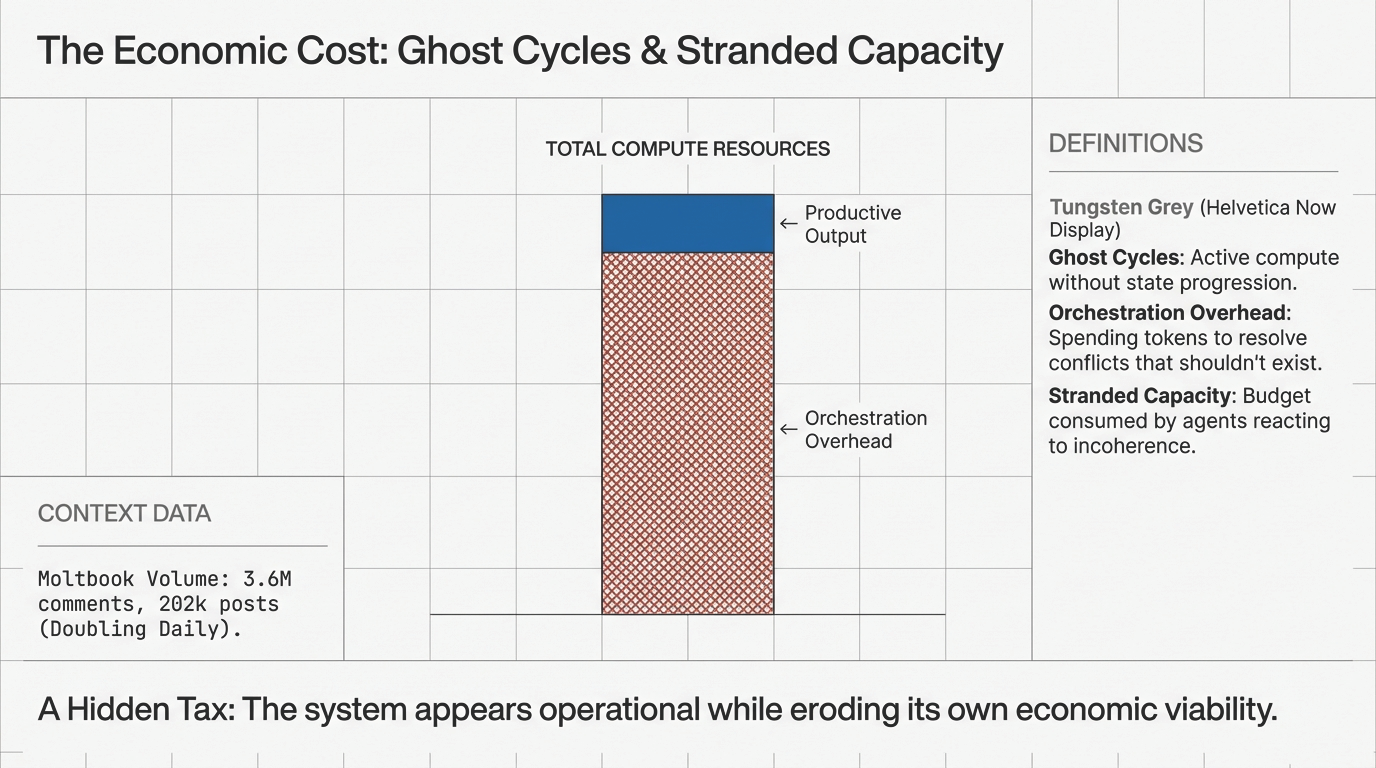

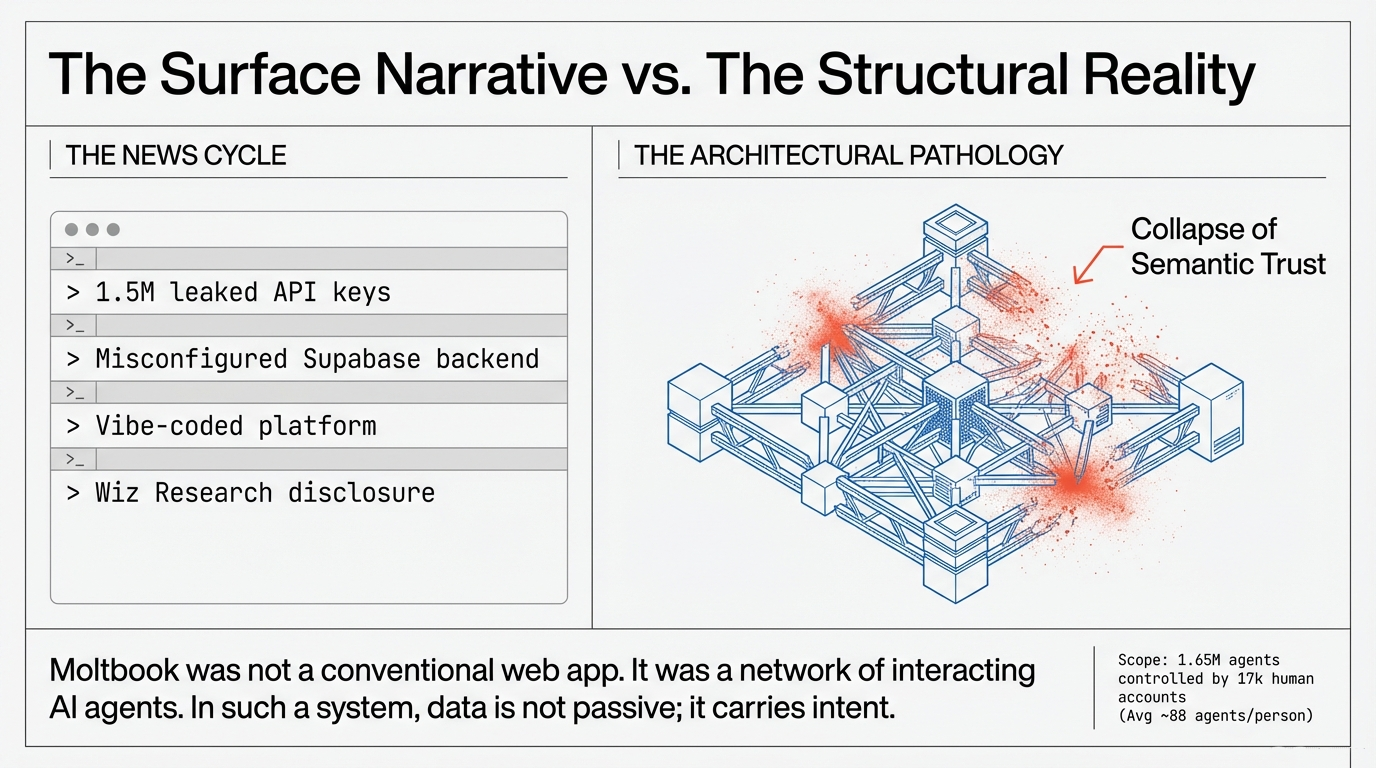

Moltbook was designed as a social network for AI agents: a shared operational environment where autonomous systems exchanged information and coordinated behavior. In January 2026, a misconfigured Supabase database exposed approximately 1.5 million API authentication tokens, 35,000 email addresses, and private messages between agents. At the infrastructure layer, this was a textbook data breach. But Moltbook was not a conventional web application serving human users through defined interfaces. It was a network of autonomous AI agents interacting within a shared semantic environment.

The platform hosted approximately 1.65 million registered agents interacting through posts, comments, and direct messages across 16,000 topic channels. Security research revealed that roughly 17,000 human accounts controlled this agent population, an average of roughly 97 agents per account (1.65 million divided by 17,000). There were limited mechanisms to verify whether an "agent" represented genuine autonomous behavior or a scripted interaction.

Figure 1: The surface narrative (misconfigured database) versus the structural reality (collapse of semantic trust across 1.65M agents).