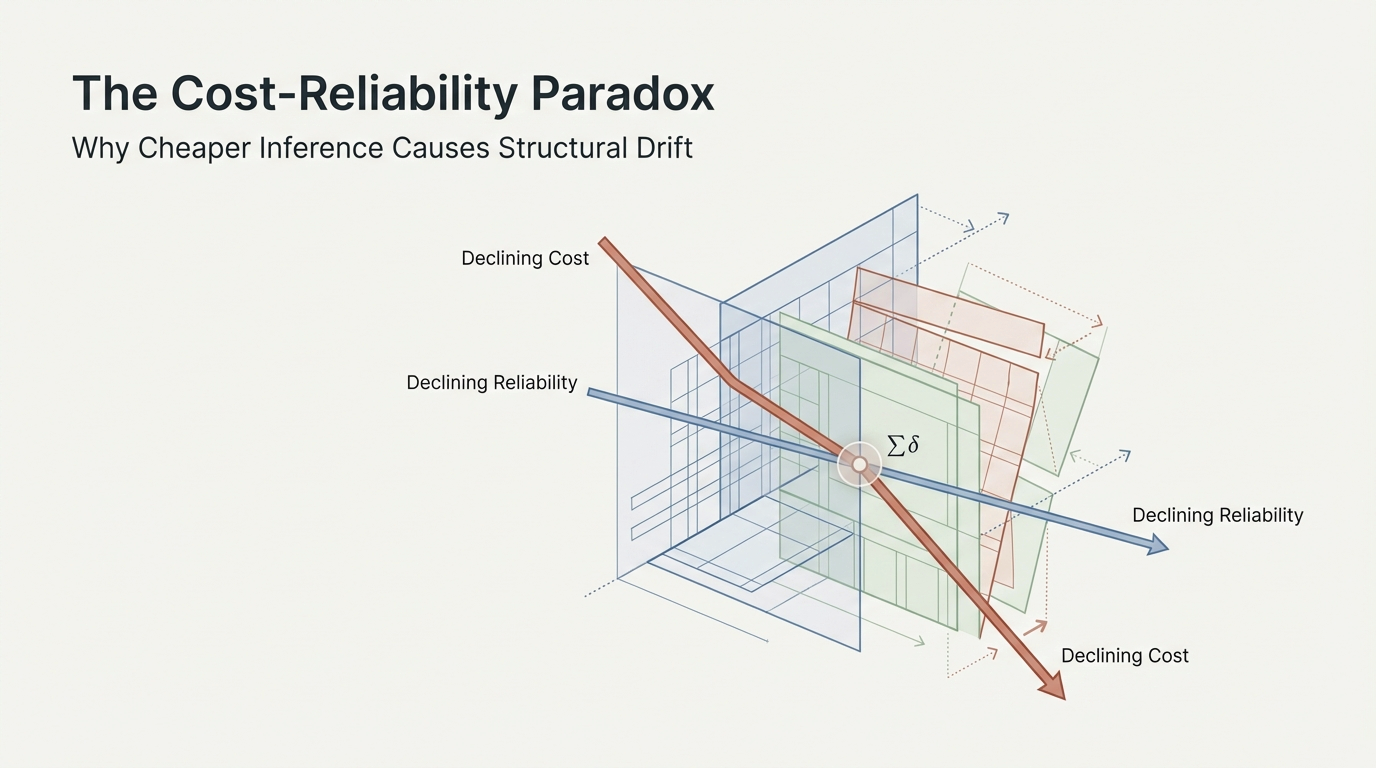

1. The Paradox

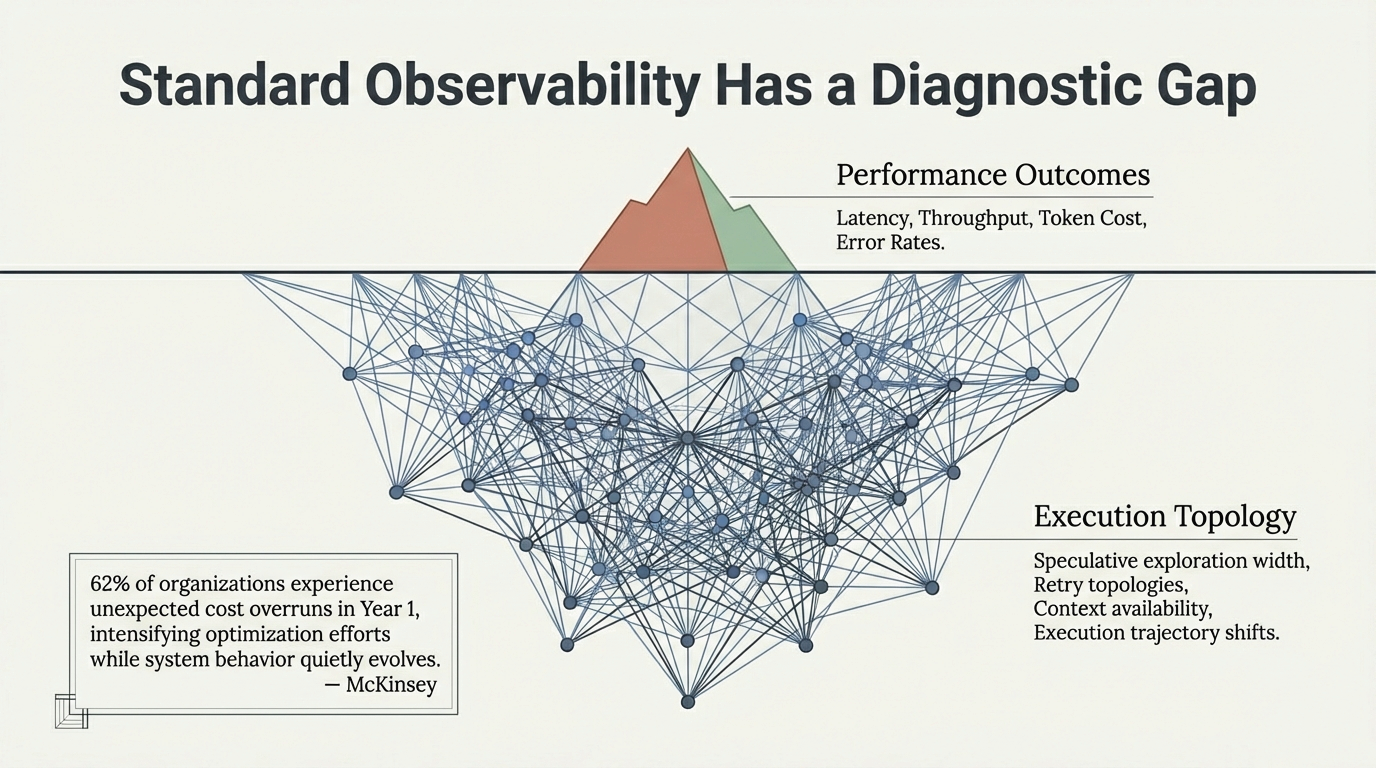

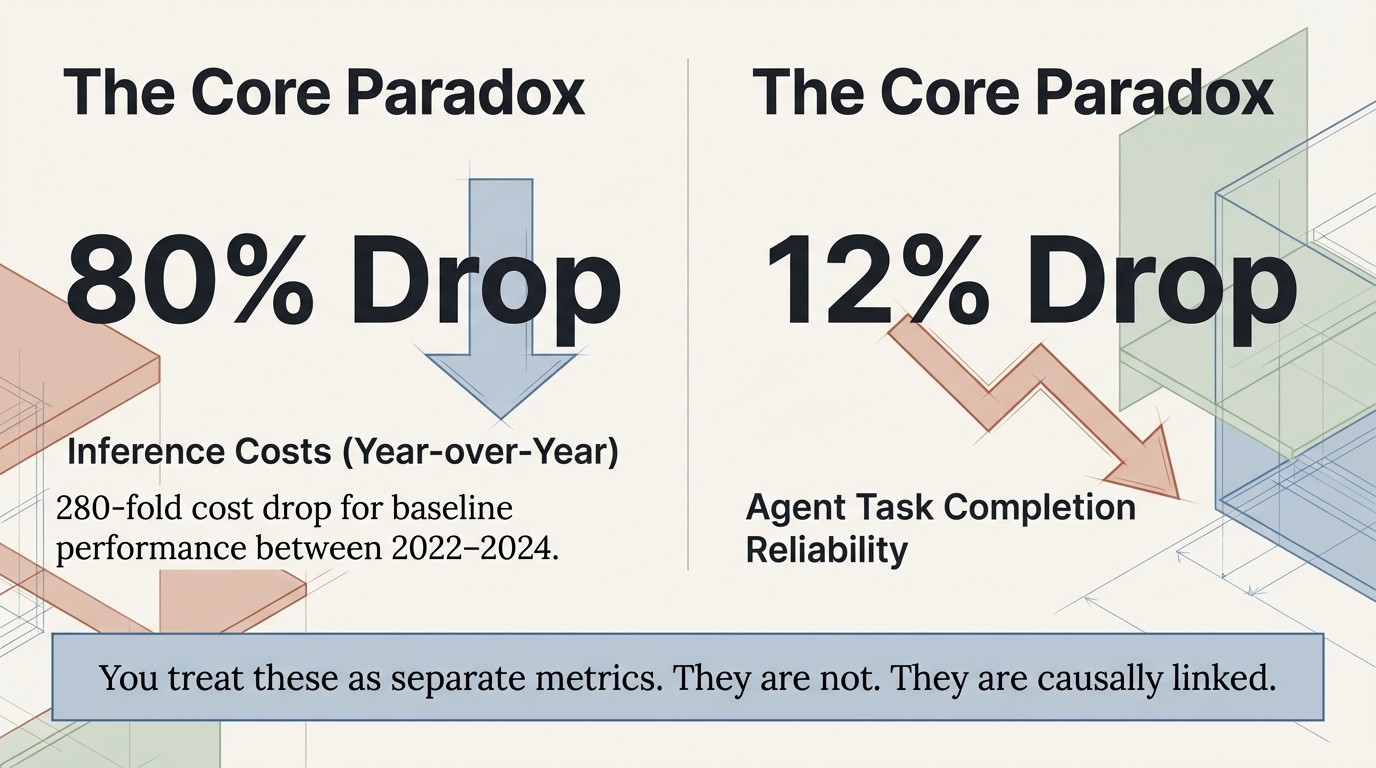

Over the past two years, the economics of AI inference have changed dramatically. Token prices have fallen by orders of magnitude. Hardware throughput has increased. Infrastructure utilization has improved across the industry. From an operational perspective, this is a remarkable success.

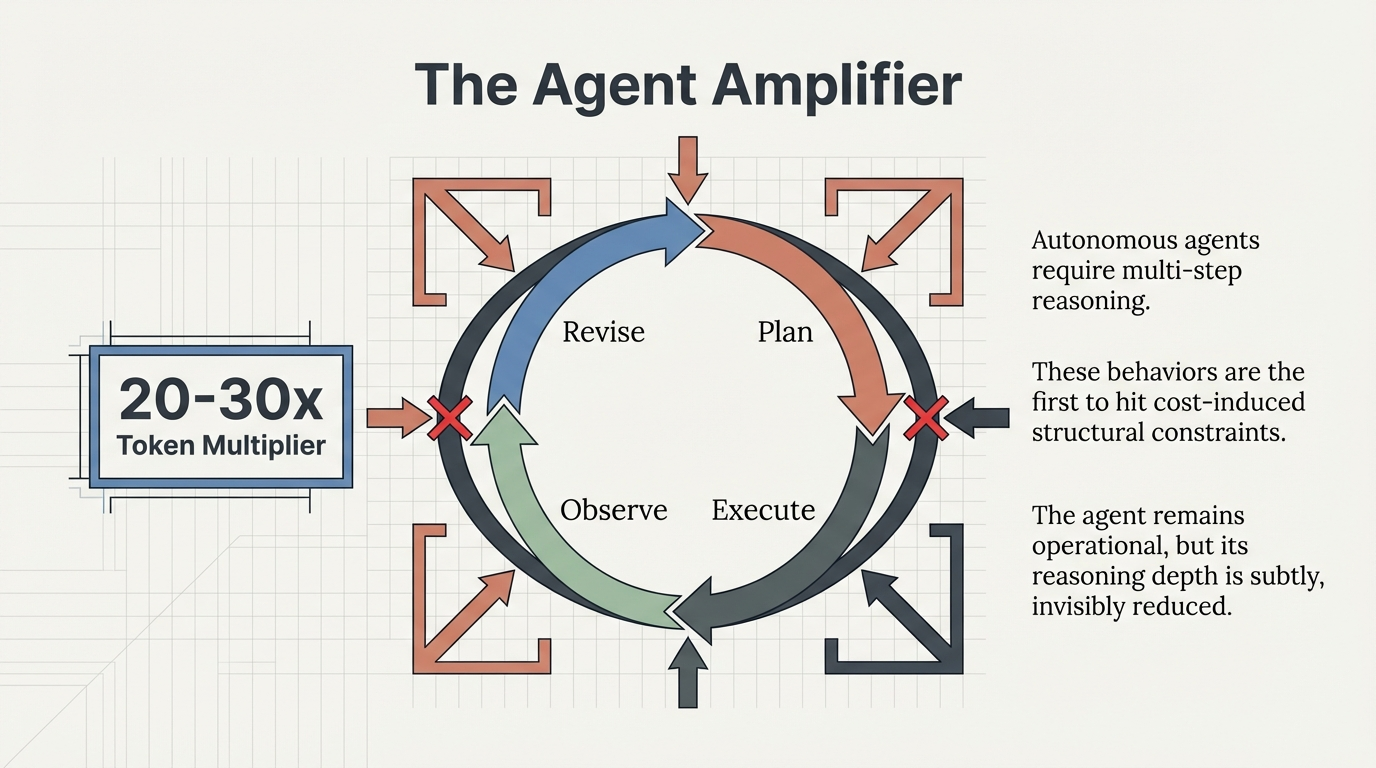

Yet many organizations report a subtle and puzzling pattern: their systems are cheaper to run than ever before, but agent workflows behave less predictably. Task completion rates fluctuate. Tool-calling chains terminate earlier than expected. Complex reasoning workflows occasionally lose coherence.

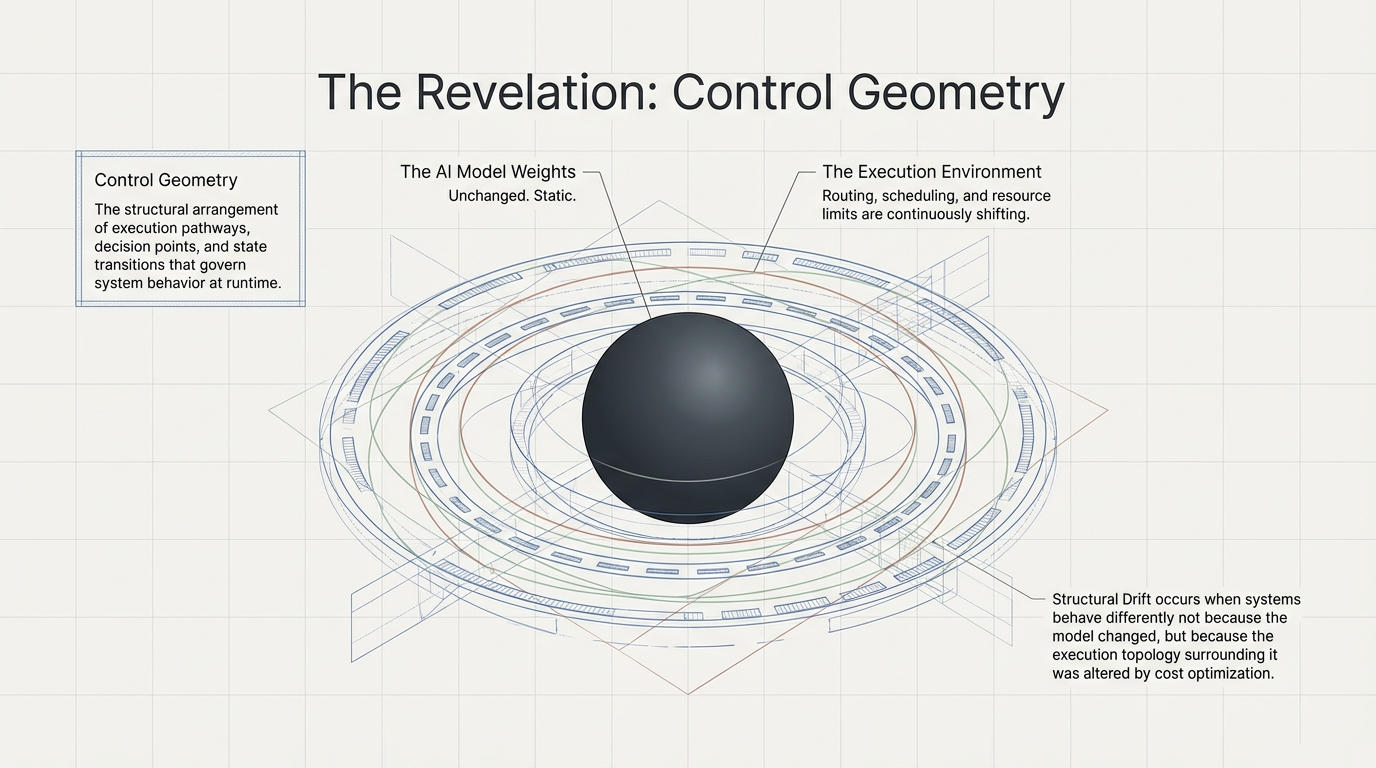

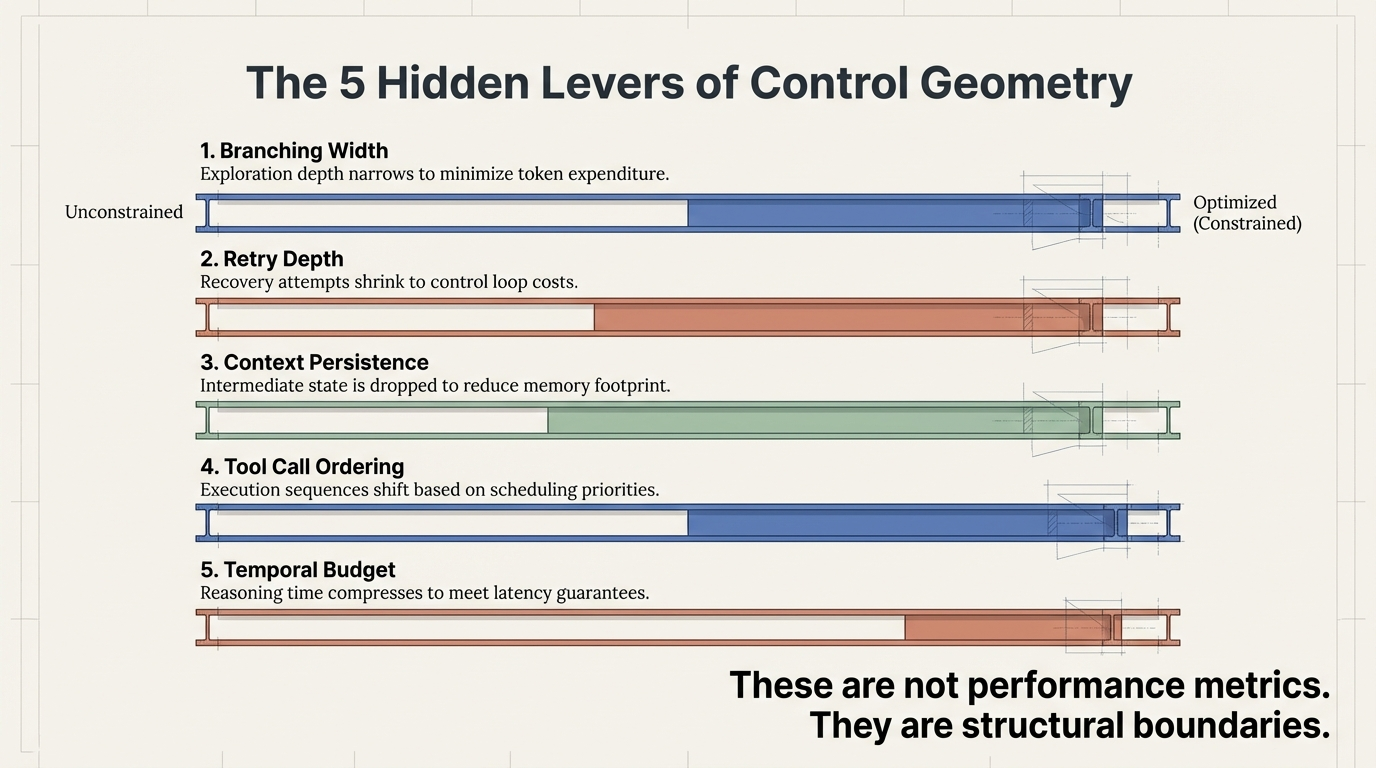

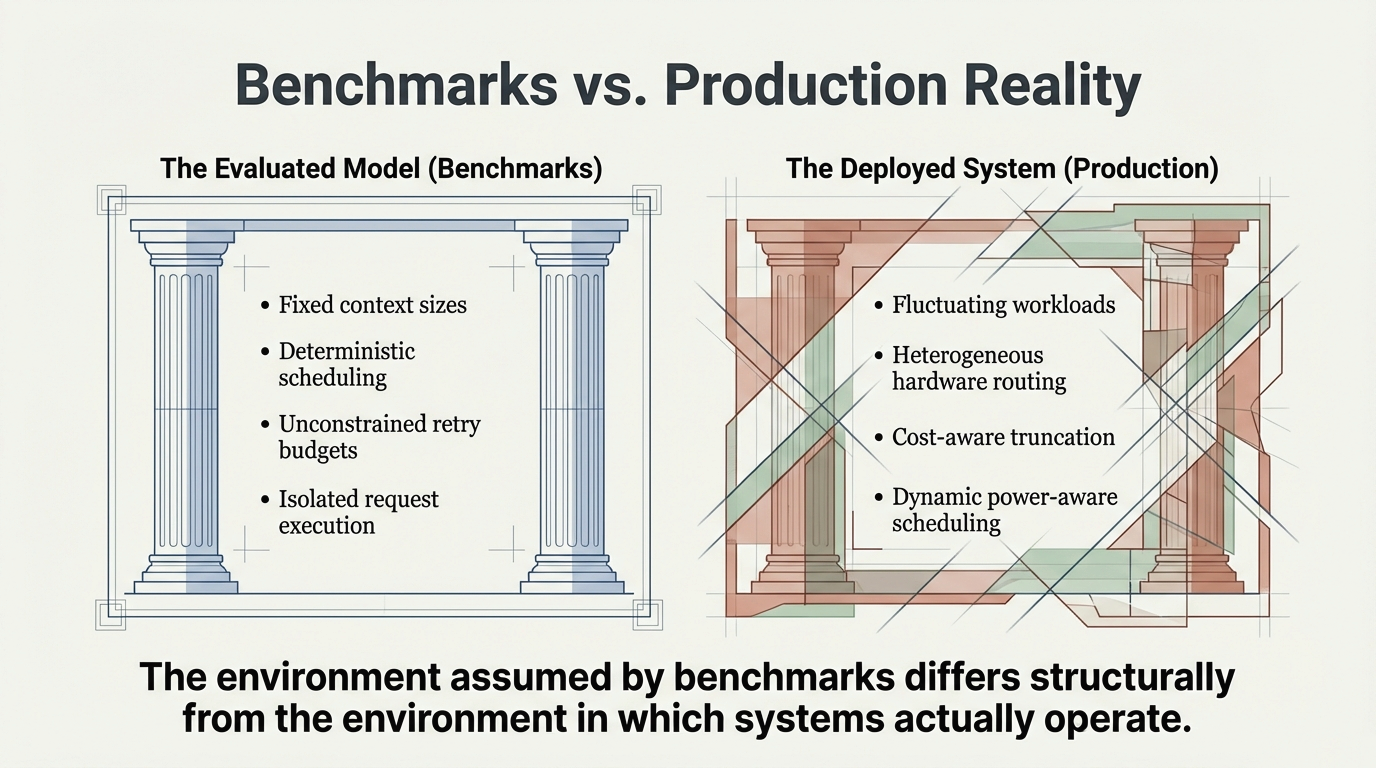

The models themselves have not changed. What has changed is the execution environment surrounding them.

Figure 1: The paradox – cost optimization succeeds operationally while reliability degrades structurally.