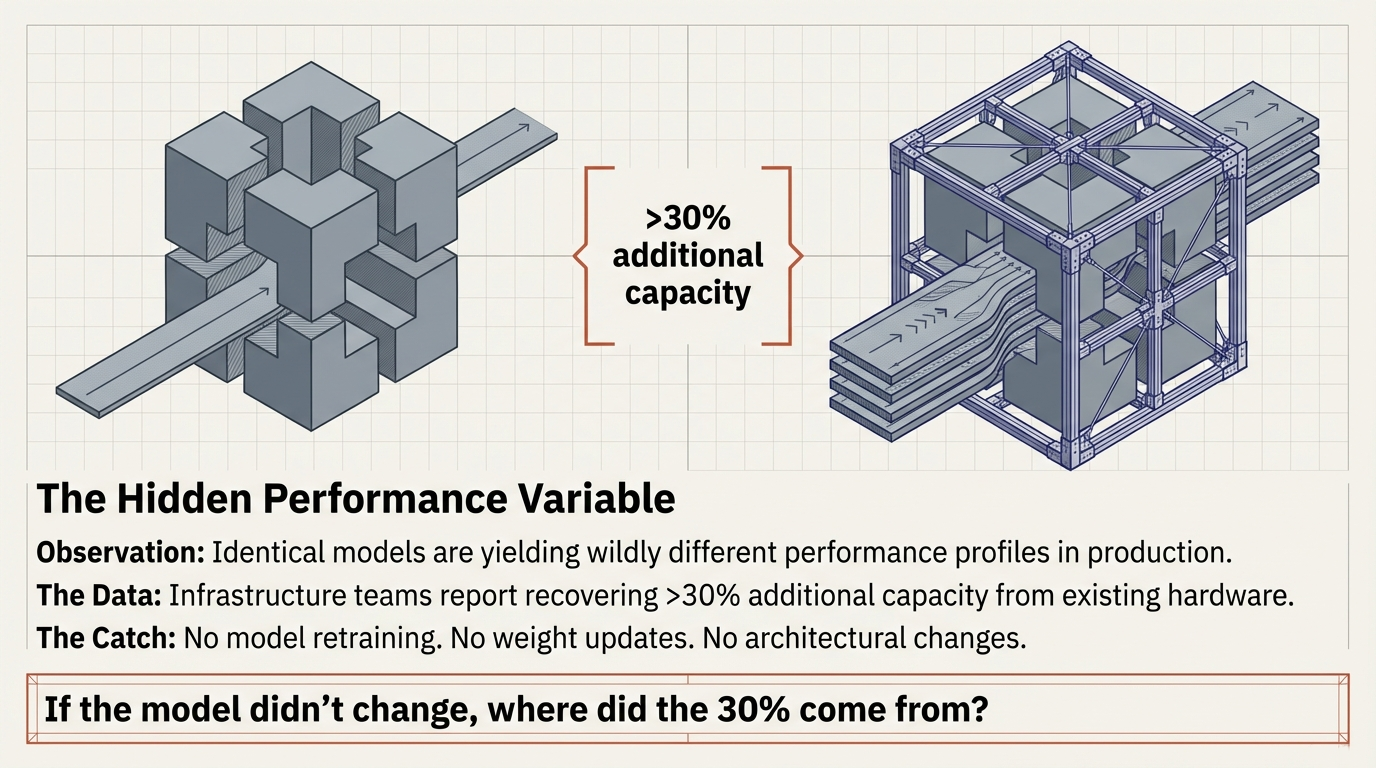

1. The Hidden Performance Variable

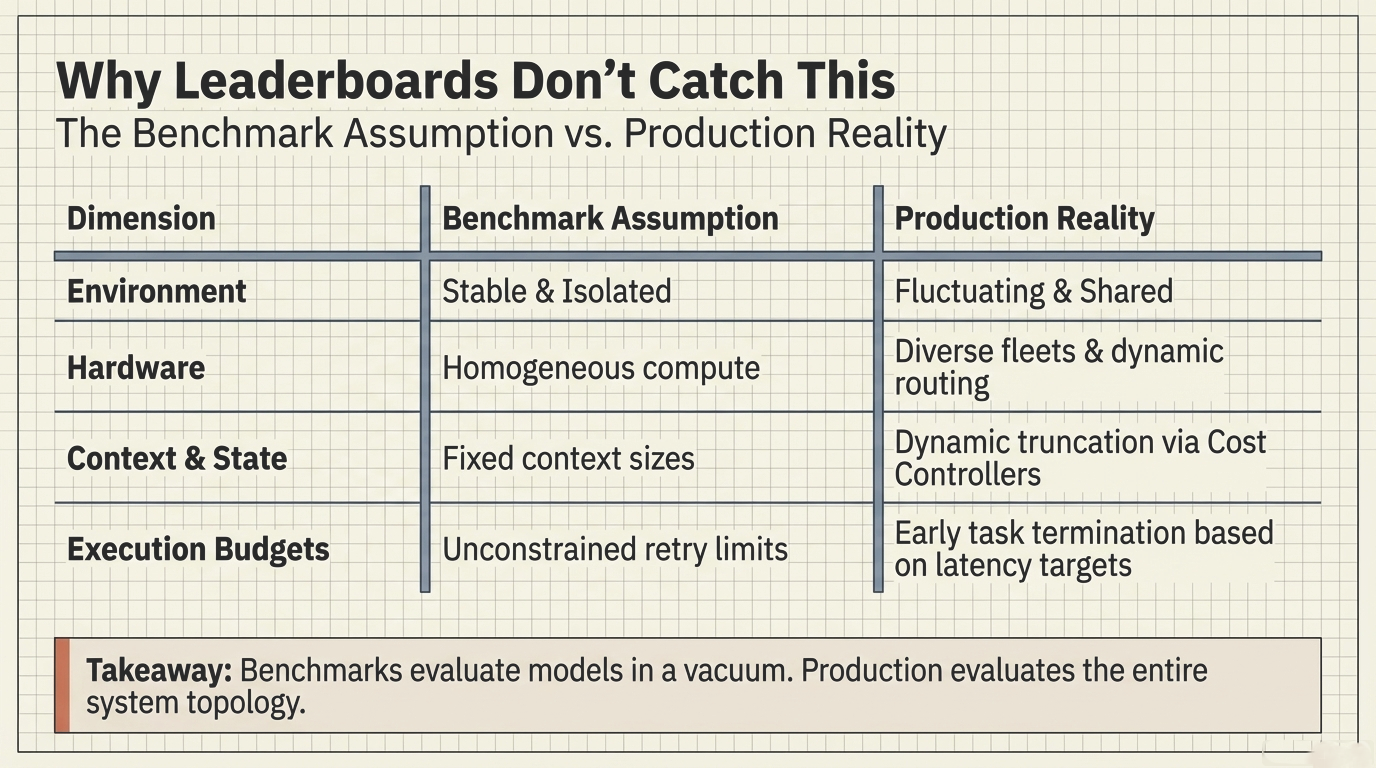

Modern AI systems are typically evaluated through the lens of model capability. Benchmarks measure reasoning ability. Leaderboards compare architectures. Research papers focus on parameter counts, training data, and evaluation scores.

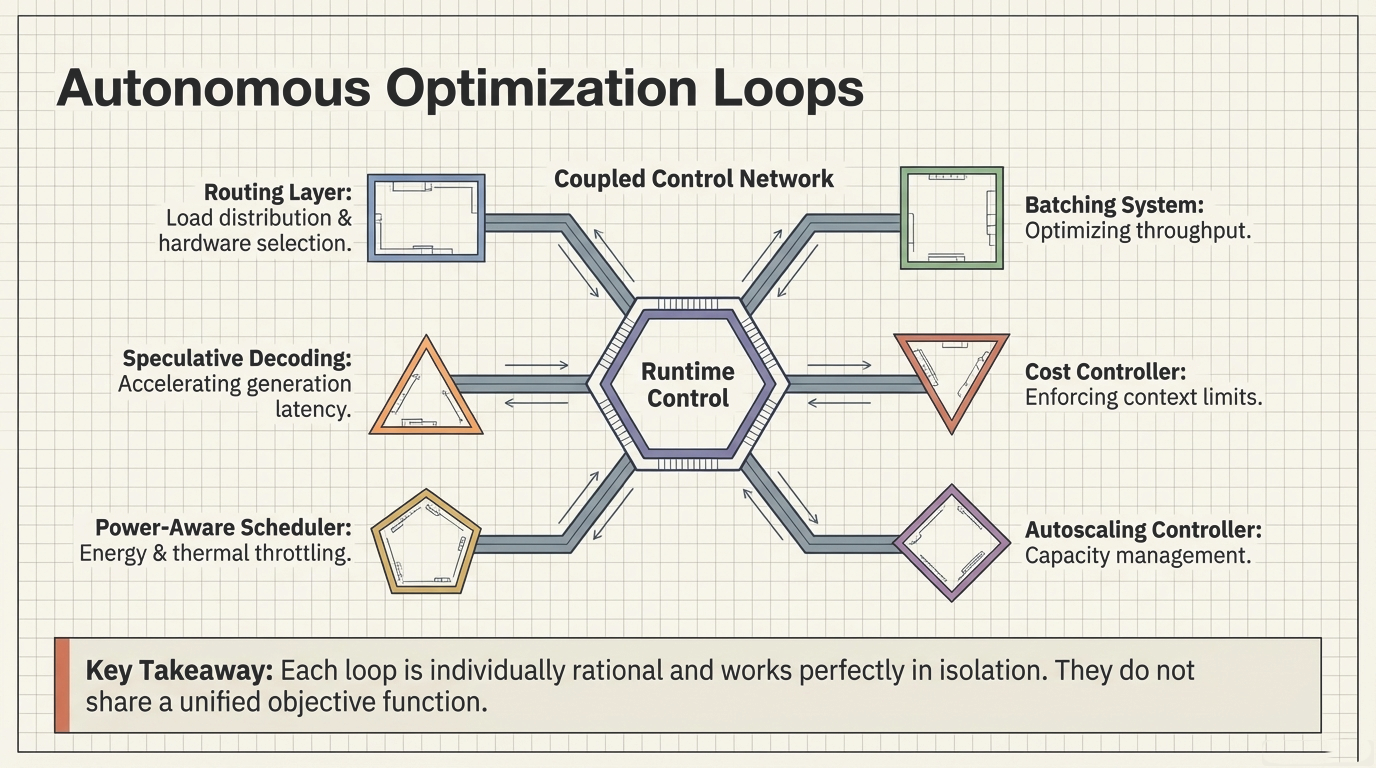

Yet in large-scale deployments, many of the most significant performance improvements no longer come from better models. They come from the runtime control layer, the part of the system that handles runtime scheduling and inference orchestration. In many systems, this layer remains the least visible part of the architecture.

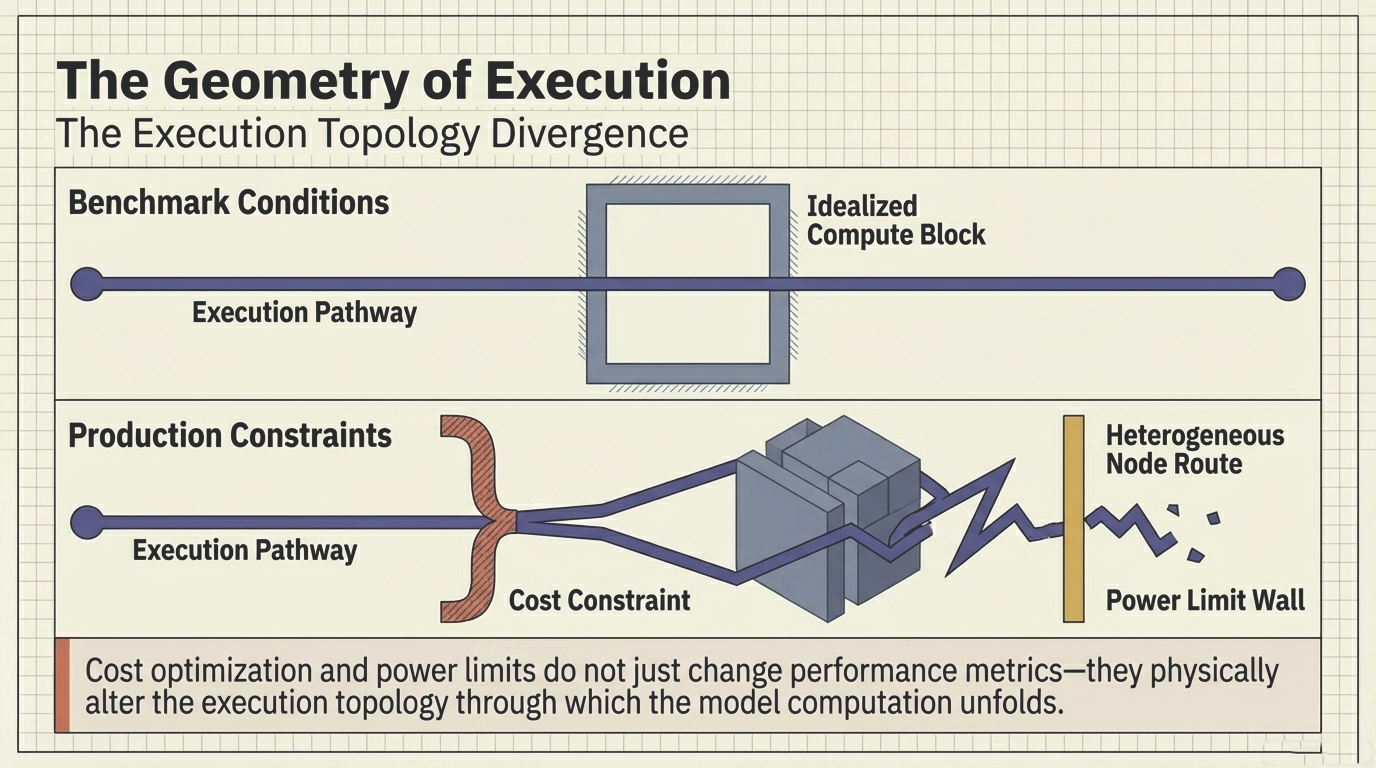

Figure 1: The hidden performance variable. Identical models can yield different performance profiles in production. In some reported cases, infrastructure alignment alone has recovered more than 30 percent additional capacity.

Infrastructure teams across the industry have reported significant throughput gains after refining runtime scheduling and inference orchestration. In some cases, infrastructure alignment alone has recovered more than 30 percent additional capacity from existing hardware. No model retraining was required. No architectural changes to the neural network were necessary. The improvement came entirely from better coordination within the runtime control layer.